A year and a half ago we started integrating AI tools into our development workflow across Python, Django, React, Flutter, and .NET projects. This is an honest account of what changed — specifically around boilerplate and code review, which are the two areas where the impact has been most concrete.

Not a productivity manifesto. Just what actually happened.

The boilerplate problem

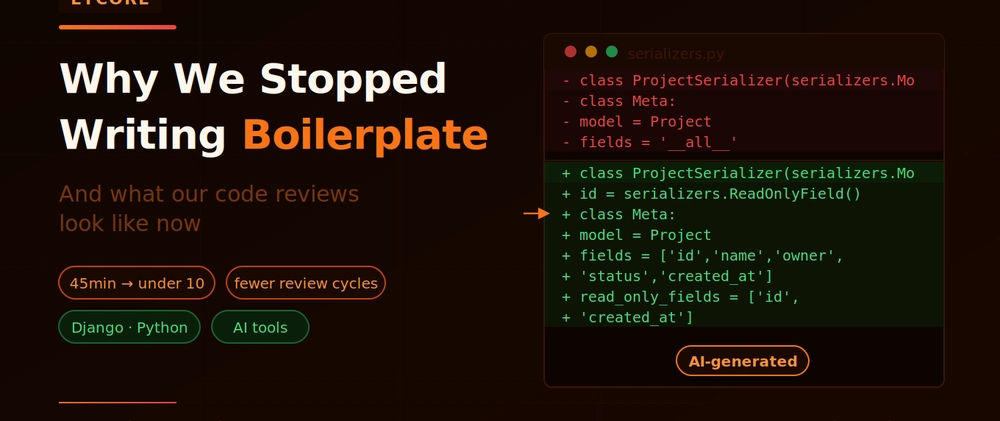

Before AI tools, setting up a new Django app involved a lot of typing that every senior engineer on our team had done hundreds of times. Serializers, viewsets, URL routing, permission classes, filter backends, initial migrations — none of it is hard, but it takes 30–45 minutes of focused work that adds zero intellectual value.

We now generate that scaffolding in under two minutes. Here is roughly what a typical prompt looks like:

    Create a Django REST Framework setup for a Project model with the
    following fields: name (CharField), owner (ForeignKey to User),
    status (choices: draft/active/archived), created_at, updated_at.

    Include:
    - ModelSerializer with read-only id, created_at, updated_at
    - ViewSet with list, retrieve, create, update, partial_update
    - IsAuthenticated permission
    - Filter by status and owner
    - URL routing

The output is accurate, follows our patterns, and is ready for a senior engineer to review structurally before anything is built on top of it. That last part, "ready for a senior engineer to review structurally", is the rule we follow. AI writes the skeleton. A human checks the architecture before it becomes load-bearing. This stops structural mistakes from propagating through the codebase.
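If the same scaffolding request is repeated for every new model, the prompt can be generated from a small spec so the wording stays consistent between engineers. The following is only a minimal sketch under that assumption: `ScaffoldSpec` and `build_scaffold_prompt` are hypothetical names introduced for illustration, and the example simply reproduces the Project prompt shown above.

```python
from dataclasses import dataclass


@dataclass
class ScaffoldSpec:
    """Hypothetical description of the scaffolding to request."""
    model: str
    fields: list
    read_only: list
    actions: list
    permission: str
    filters: list


def build_scaffold_prompt(spec: ScaffoldSpec) -> str:
    """Render the spec as the plain-text prompt given to the AI tool."""
    lines = [
        f"Create a Django REST Framework setup for a {spec.model} model with the",
        f"following fields: {', '.join(spec.fields)}.",
        "",
        "Include:",
        f"- ModelSerializer with read-only {', '.join(spec.read_only)}",
        f"- ViewSet with {', '.join(spec.actions)}",
        f"- {spec.permission} permission",
        f"- Filter by {' and '.join(spec.filters)}",
        "- URL routing",
    ]
    return "\n".join(lines)


# The Project example from the prompt above, expressed as a spec.
project_spec = ScaffoldSpec(
    model="Project",
    fields=[
        "name (CharField)",
        "owner (ForeignKey to User)",
        "status (choices: draft/active/archived)",
        "created_at",
        "updated_at",
    ],
    read_only=["id", "created_at", "updated_at"],
    actions=["list", "retrieve", "create", "update", "partial_update"],
    permission="IsAuthenticated",
    filters=["status", "owner"],
)

print(build_scaffold_prompt(project_spec))
```

Keeping the spec as data also gives the reviewing engineer a short, readable record of what was asked for, separate from what was generated.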

What changed in code review

This is the more interesting shift.

Before AI tools, our code reviews caught a mix of things:

- Obvious mechanical issues: missing null checks, inefficient queries, typos in variable names
- Structural concerns: wrong abstraction level, coupling that shouldn't exist
- Business logic errors: misunderstood requirements, missing edge cases
- Security issues: missing validation, exposed data in serializers

AI handles the first category well. We now run Claude over every PR before it goes to human review, with a prompt along these lines:

    Review this Django code for:
    - N+1 query problems
    - Missing null/empty checks
    - Serializer fields that expose sensitive data unintentionally
    - Missing error handling in API views
    - Any obvious security concerns

    Code:
    [paste diff]

It catches N+1 queries reliably. It spots missing select_related and prefetch_related calls. It flags serializer fields that probably shouldn't be writable. It notices when an exception is swallowed silently.
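For readers who have not met the term, the N+1 pattern is not specific to Django. As a rough illustration, the sketch below reproduces it with the standard-library `sqlite3` module rather than the Django ORM. The `owner` and `project` tables and all function names are assumptions made for this example. The joined query is the plain-SQL counterpart of what `select_related` does for a foreign key.

```python
import sqlite3


def build_db() -> sqlite3.Connection:
    """Create an in-memory database with a few hypothetical rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE owner (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE project (
            id INTEGER PRIMARY KEY,
            name TEXT NOT NULL,
            owner_id INTEGER NOT NULL REFERENCES owner(id)
        );
        INSERT INTO owner (id, name) VALUES (1, 'ana'), (2, 'ben');
        INSERT INTO project (id, name, owner_id)
        VALUES (1, 'alpha', 1), (2, 'beta', 2), (3, 'gamma', 1);
        """
    )
    return conn


def count_selects(conn, fn):
    """Run fn(conn) and return (result, number of SELECT statements issued)."""
    traced = []
    conn.set_trace_callback(traced.append)
    try:
        result = fn(conn)
    finally:
        conn.set_trace_callback(None)
    selects = [s for s in traced if s.lstrip().upper().startswith("SELECT")]
    return result, len(selects)


def projects_n_plus_one(conn):
    """One query for the projects, then one extra query per project."""
    projects = conn.execute(
        "SELECT name, owner_id FROM project ORDER BY id"
    ).fetchall()
    result = []
    for name, owner_id in projects:
        owner = conn.execute(
            "SELECT name FROM owner WHERE id = ?", (owner_id,)
        ).fetchone()
        result.append((name, owner[0]))
    return result


def projects_joined(conn):
    """The same data fetched with a single JOIN."""
    return conn.execute(
        "SELECT p.name, o.name FROM project p "
        "JOIN owner o ON o.id = p.owner_id ORDER BY p.id"
    ).fetchall()


conn = build_db()
slow_rows, slow_queries = count_selects(conn, projects_n_plus_one)
fast_rows, fast_queries = count_selects(conn, projects_joined)
print(slow_queries, fast_queries)
```

With three projects, the first version issues four SELECT statements and the second issues one, and both return the same rows. The gap grows linearly with the number of projects, which is why this is worth catching before human review.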

This means our human reviewers spend almost no time on mechanical issues. They spend their time on the things AI is not good at: whether the abstraction is right, whether the business logic matches the actual requirement, whether this code will be maintainable in 18 months.

The rule about tests

One thing we learned the hard way: never let AI write both the implementation and the tests for the same code.

If you do this, the tests pass — but they test what the code does, not what it should do. You end up with 100% coverage on the wrong behaviour.

Our rule: tests are written against the specification, not against the implementation. We write test cases from requirements first (even just as comments describing what each test should verify), then use AI to fill in the test code. The human wrote the spec for the test. The AI wrote the boilerplate of the test function.

This produces test suites that actually catch regressions rather than just confirming that the code runs.
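As a sketch of that split, the example below starts from requirement comments a human might write and fills in the test bodies around them. The status-transition rules (draft to active to archived, with archived as terminal) are a hypothetical requirement invented for illustration, as are `can_transition` and the `REQ-n` labels.

```python
import unittest

# Hypothetical rule set: which target statuses each status may move to.
ALLOWED_TRANSITIONS = {
    "draft": {"active"},
    "active": {"archived"},
    "archived": set(),
}


def can_transition(current: str, target: str) -> bool:
    """Return True if a project may move from `current` to `target`."""
    return target in ALLOWED_TRANSITIONS.get(current, set())


class ProjectStatusSpec(unittest.TestCase):
    # REQ-1 (written by a human): a draft project can be activated.
    def test_draft_can_be_activated(self):
        self.assertTrue(can_transition("draft", "active"))

    # REQ-2: a draft project cannot be archived directly.
    def test_draft_cannot_skip_to_archived(self):
        self.assertFalse(can_transition("draft", "archived"))

    # REQ-3: an archived project is terminal and cannot be reopened.
    def test_archived_is_terminal(self):
        for target in ("draft", "active"):
            self.assertFalse(can_transition("archived", target))

    # REQ-4: unknown statuses are rejected rather than raising an error.
    def test_unknown_status_is_rejected(self):
        self.assertFalse(can_transition("deleted", "active"))
```

The suite can be run with `python -m unittest` against the module that contains it. The requirement comments are the part that stays under human control; if the implementation drifts from them, the tests fail instead of quietly agreeing with the new behaviour.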

What the numbers look like

We are careful about overstating productivity claims — the research on this is genuinely mixed and highly context-dependent. But across our team over the past year:

New module setup time is down significantly. What took 45 minutes now takes under 10, including review.

Code review cycles are shorter. Pre-review AI checks eliminate a full round of comments on mechanical issues in most PRs.

Documentation coverage is higher. We generate initial API docs and changelog entries with AI after each sprint. This used to get skipped under time pressure.

The gains are real. They are also concentrated in specific task types. Complex architectural decisions, novel integrations, debugging subtle race conditions — AI adds overhead on these, not speed. Knowing which is which is the actual skill.

What we still do entirely by hand

Security-critical code. Authentication systems, payment processing, access control logic, data encryption: we write these carefully, review them carefully, and do not rely on AI-generated implementations. Several published studies have reported higher rates of security vulnerabilities in AI-assisted code. We take that seriously.

Novel architecture. When a project requires something that isn't a well-worn pattern — a custom multi-tenant data model, a real-time system with unusual constraints — AI suggestions tend toward generic solutions. These decisions need human judgment and experience.

Anything that requires understanding the client's actual business. AI does not know why a field is named the way it is, what the edge case in the legacy data means, or why a particular design decision was made three years ago. That context lives in engineers' heads and in Slack history.

The honest summary

AI tools made our team faster on the tasks where they work well. They did not change what good engineering looks like — they just removed some of the friction in getting there.

If you are adopting AI tools on your team: spend the time figuring out exactly where in your workflow they add value and where they add overhead. The teams seeing real gains are the ones who made that distinction deliberately, not the ones who turned on Copilot and hoped for the best.

Lycore is a custom software development company. We build with Django, React, Flutter, and .NET — and we have been integrating AI tools into our workflow since they became production-ready. Questions or thoughts? Drop them in the comments.
