How autonomous AI agents can generate a complete architecture snapshot of your microservices platform - while you do push-ups - and why that documentation becomes the most powerful input for your AI-driven quality pipeline.

TL;DR

Architectural documentation is not a chore. When colocated with your source code and fed into an AI-powered quality pipeline, it transforms static analysis from "catching typos" into "catching systemic security failures and costly infrastructure leaks." This article documents a real experiment where an autonomous AI agent generated architecture files across a multi-service Google Cloud platform - with the human engineer largely off-screen - and what happened when that documentation gave our AI Quality Gate an entirely new perspective.

1. The "Self-Documenting Code" Problem

There is a persistent assumption in software engineering that well-structured code is self-explanatory. Clean functions, good variable names, and a Pylint score of 10.0/10 - surely that's enough?

It is not.

Code describes how a system executes. Architecture documentation describes why a system exists and how it interacts with everything around it. Without this context layer, every automated analysis tool is operating in the dark. It sees a function, but not its role in the broader service mesh. It sees an API call, but not the security boundary it is expected to enforce.

This distinction matters enormously when you introduce AI-powered tools into your engineering workflow. Asking an LLM to analyze raw code without architectural context is like asking a senior engineer to perform a security review without access to the system design.

2. Generating Architecture While Doing Push-ups

My platform runs on Google Cloud. It consists of dozens of microservices deployed on Cloud Run, interacting via REST APIs, persisting assets to Google Cloud Storage, and routing all AI operations through a centralized Vertex AI gateway. A rich, well-connected system - but one where the only documentation was spread across scattered README files.

I set out to change that. The goal: a standardized, machine-readable architectural snapshot for every service, committed directly to the repository.

The method: guided autonomous agent execution.

The engineer set a direction, established the documentation standard, and then stepped back. The AI agent - powered by Gemini 3 Flash and Claude Sonnet 4.6 running inside Antigravity, an agentic AI coding assistant - took over. It autonomously inspected each service, read the source code, traced inter-service dependencies, cross-referenced existing implementations against the documentation standard, and iteratively generated structured ARCHITECTURE.md files. The engineer's main activity during most of this process was physical exercise.

The output was not informal notes. It was a disciplined, multi-level documentation hierarchy:

📦 platform-root
┣ 📜 ARCHITECTURE.md ← Level 0: Global service mesh, topology, lifecycle status
┗ 📂 services
  ┣ 📂 core-ai-gateway
  ┃ ┗ 📜 ARCHITECTURE.md ← Level 1: Security policy engine, FinOps guardrails
  ┣ 📂 orchestration-bot
  ┃ ┗ 📜 ARCHITECTURE.md ← Level 1: Async task flow, Telegram webhook handling
  ┣ 📂 media-transcriber
  ┃ ┗ 📜 ARCHITECTURE.md ← Level 1: Speech-to-Text pipeline, GCS asset management
  ┗ 📂 translation-engine
    ┗ 📜 ARCHITECTURE.md ← Level 1: Structured output, multilingual routing

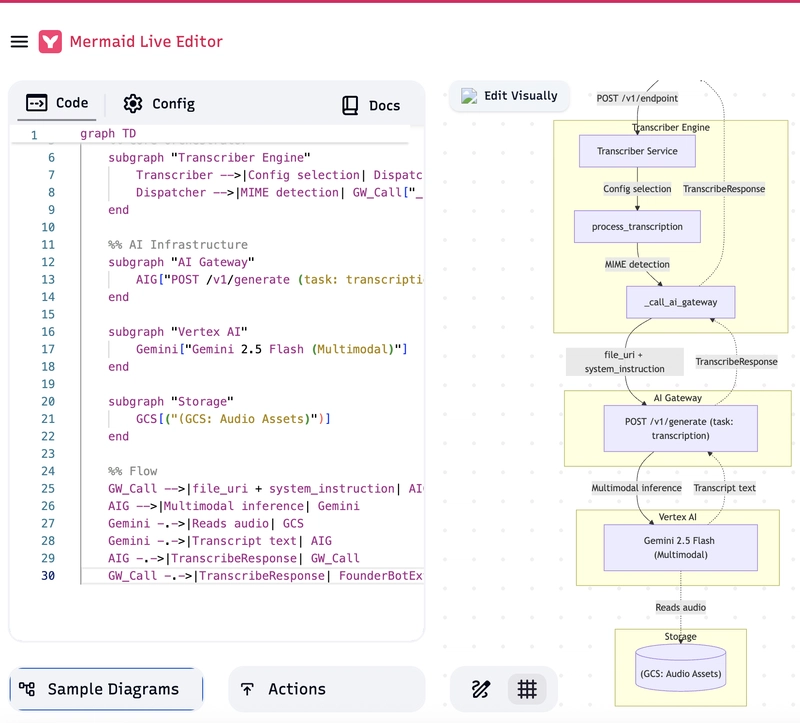

Each document followed a strict template:

- Intent: The concrete business and technical reason this service exists.

- Design Principles: Key trade-offs - statelessness, latency targets, fallback strategies.

- Interaction Diagram: A Mermaid graph of service-to-service flows, security boundaries, and AI provider integrations. The agent can generate it, and GitLab renders it automatically.

- LLM Context Block: A precise summary optimized for consumption by automated agents and AI reviewers.

The entire operation resulted in a navigable, cross-linked architecture map, visualizations included, built with minimal human cognitive effort.
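A strict template is only useful if something checks it. The sketch below shows one way a CI step could verify that an ARCHITECTURE.md contains the four template sections. The section names come from the template above; the `##` heading convention, the function name `missing_sections`, and the sample document are assumptions made for illustration, not the layout the agent actually produced.

```python
import re

# Section names taken from the template above. Treating each one as a
# Markdown heading is an assumption about how the files are laid out.
REQUIRED_SECTIONS = (
    "Intent",
    "Design Principles",
    "Interaction Diagram",
    "LLM Context Block",
)


def missing_sections(markdown_text: str) -> list[str]:
    """Return the required section names that have no matching heading."""
    headings = {
        match.group(1).strip().lower()
        for match in re.finditer(
            r"^#{1,6}[ \t]+(.+?)[ \t]*$", markdown_text, re.MULTILINE
        )
    }
    return [name for name in REQUIRED_SECTIONS if name.lower() not in headings]


# Hypothetical document used only to exercise the check.
sample = """# core-ai-gateway

## Intent
Central entry point for all AI operations.

## Design Principles
Stateless service.

## Interaction Diagram
(Mermaid graph goes here)
"""

print(missing_sections(sample))  # ['LLM Context Block']
```

Failing the pipeline when the returned list is non-empty keeps every service document consistent enough for automated readers.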

3. The Quality Gate Awakening

Once the documentation was committed alongside the source code, I ran a standard CI quality review using our AI-powered Quality Gate - a service built on top of Gemini via Vertex AI, designed to perform automated architectural and security reviews on every merge request.

💡 What is the Quality Gate, exactly?

It is not a $100,000 enterprise SaaS platform. It is a lightweight, purpose-built microservice - part of the same platform it reviews - deployed on Google Cloud Run. It exposes a single endpoint, receives the merge request diff from the CI pipeline, constructs an LLM prompt enriched with the repository's architectural documentation, calls Vertex AI (Gemini), and returns a structured JSON review report.

Because it runs on Cloud Run, it starts only when a review is triggered and shuts down immediately after. The total monthly cost for me is a few dollars - a fraction of a single human code review hour. This is a practical demonstration of the Google Cloud serverless model: pay only for the compute you actually use, and use high-intelligence AI only when it adds value.
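The request flow described above can be sketched in a few functions. This is a minimal illustration under stated assumptions, not the actual service: the function names, the prompt wording, and the `findings` key are hypothetical, and the Vertex AI call is deliberately left as an injected `call_model` callable so that no specific SDK signature is implied.

```python
import json
from pathlib import Path
from typing import Callable


def collect_architecture_docs(repo_root: Path) -> dict[str, str]:
    """Map each ARCHITECTURE.md path (relative to the repo root) to its text."""
    return {
        path.relative_to(repo_root).as_posix(): path.read_text(encoding="utf-8")
        for path in sorted(repo_root.rglob("ARCHITECTURE.md"))
    }


def build_review_prompt(diff: str, docs: dict[str, str]) -> str:
    """Combine the architecture docs and the merge request diff into one prompt."""
    doc_sections = "\n\n".join(
        f"### {name}\n{text.strip()}" for name, text in docs.items()
    )
    return (
        "You are reviewing a merge request against the documented architecture.\n"
        "Report violations as JSON with a 'findings' list.\n\n"
        f"## Architecture documentation\n{doc_sections}\n\n"
        f"## Merge request diff\n{diff}"
    )


def review(diff: str, repo_root: Path, call_model: Callable[[str], str]) -> dict:
    """Run one review. call_model stands in for the Vertex AI (Gemini) call."""
    prompt = build_review_prompt(diff, collect_architecture_docs(repo_root))
    # The model is expected to answer with a JSON document.
    return json.loads(call_model(prompt))
```

Keeping the model call behind a plain callable also makes the prompt construction testable in CI without spending any tokens.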

The difference was immediately visible.

Previously, without architectural context, the Quality Gate was limited to code-level analysis: style consistency, common security anti-patterns, dependency versions. Useful, but shallow.

With the ARCHITECTURE.md files available as context, the model could see the architecture and the code simultaneously. The result was a qualitative leap: the Quality Gate shifted from a static analysis tool into a reasoning system operating at the level of system design.

It identified two critical issues within minutes - issues that had existed undetected in the codebase for months.

Finding 1: The Distributed Tracing Blackout

One of our routing services included middleware that explicitly stripped incoming trace headers. On the surface, this looked like a reasonable security measure to prevent external clients from injecting trace identifiers into internal systems.

The Quality Gate identified it as a critical observability violation.

Because the architectural documentation described the distributed tracing standard across the mesh - including the requirement for end-to-end X-Trace-ID propagation compatible with Google Cloud Trace - the model understood that stripping these headers at the boundary did not isolate a threat. It severed the trace chain entirely. In any production incident, engineers would be unable to correlate logs across services in Cloud Logging, turning a routine debugging session into a multi-hour forensic investigation with no Cloud Audit Logs correlation to lean on.

Security intention ✓. Systemic consequence ✗. The documentation made this contradiction visible.

Finding 2: The Silent Storage Leak

A media processing service was documented as intentionally skipping cleanup of temporary assets in Google Cloud Storage after each processing job. The rationale was implicit - simplicity, no failure modes from deletion errors.

The Quality Gate cross-referenced this against the documented architectural principle of data minimization and least-privilege access, and flagged it as both a security and FinOps violation.

The impact: user audio files - potentially containing sensitive personal information - accumulating indefinitely in cloud storage. No lifecycle policy. No deletion trigger. Silent, compounding cost growth. An expanding attack surface with each new processing request.
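Both gaps named here, the missing deletion trigger and the missing lifecycle policy, can be sketched briefly. The code below is illustrative only: `process_with_cleanup`, its injected `process` and `delete_object` callables, and the retention period are hypothetical. The dictionary follows the rule/action/condition shape used by Cloud Storage lifecycle configurations, though the exact wrapper a given tool expects may differ.

```python
import json
from typing import Callable


def process_with_cleanup(
    object_name: str,
    process: Callable[[str], str],
    delete_object: Callable[[str], None],
) -> str:
    """Process a temporary asset and delete it afterwards, even on failure."""
    try:
        return process(object_name)
    finally:
        # Runs whether processing succeeded or raised. If the deletion itself
        # fails, the lifecycle rule below acts as the safety net.
        delete_object(object_name)


def lifecycle_config(max_age_days: int) -> str:
    """Build a lifecycle configuration that deletes objects past a given age."""
    if max_age_days < 0:
        raise ValueError("max_age_days must not be negative")
    config = {
        "rule": [
            {"action": {"type": "Delete"}, "condition": {"age": max_age_days}}
        ]
    }
    return json.dumps(config, indent=2)


print(lifecycle_config(1))
```

Explicit deletion handles the normal path, while the bucket-level rule bounds how long any leftover file can survive.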

Neither a linter nor a code reviewer scanning functions in isolation would have flagged either of these. Both findings emerged from the intersection of code behavior and architectural intent - visible only because the documentation existed.

4. The ROI Case

This experiment produced a return on investment across four dimensions:

| Dimension | Without Documentation | With Documentation + AI Agent |
|---|---|---|
| Architecture Capture | Senior Architect hours | Agent cycle, near-zero human effort |
| Review Quality | Code-level findings | System-level and policy findings |
| Issue Discovery Cost | Post-incident or audit | CI/CD pipeline (minutes, pennies) |
| Quality Gate | Generic, rigid enterprise tool | Custom microservice, tunable per team or developer |

Three additional factors are worth noting specifically in the context of Google Cloud platforms:

Vertex AI Token Efficiency: When the Quality Gate is backed by a Gemini model, providing a structured ARCHITECTURE.md reduces the tokens the model spends reconstructing system intent from raw code. Better context means cheaper, faster, and more accurate generation - directly impacting your AI compute costs.

Cloud Run Observability: The distributed tracing finding described above is particularly relevant for Cloud Run-based architectures, where services are stateless and ephemeral. Without continuous trace propagation, debugging inter-service failures on Cloud Run becomes significantly harder. The documentation made this risk explicit and catchable.

Serverless Cost Model: Because the Quality Gate is a Cloud Run service invoked only during CI/CD runs, there is zero idle cost. On a typical team with several merge requests per day, the entire AI-powered review pipeline costs a few dollars per month - less than a single engineering hour. This is the Google Cloud serverless model working exactly as intended: high-intelligence compute, on-demand, at minimal cost.

5. Lessons for Platform Engineers

The key insight from this experiment is not that AI agents write documentation faster than humans. That is expected. The key insight is that architecture documentation living inside the repository is a force multiplier for every automated tool that reads it.

This applies whether your automated tools are AI-powered code reviewers, compliance scanners, onboarding assistants, or infrastructure planning agents. The better the documentation, the higher the signal quality of every tool operating on top of it.

Practical recommendations:

- Colocate documentation with code. A separate wiki that drifts out of sync is noise. An ARCHITECTURE.md in the service directory, updated in the same commit as the code, is signal.

- Establish a documentation standard. A consistent template (Intent, Principles, Interaction Diagram) makes documentation machine-readable, not just human-readable.

- Define a lifecycle status. Clearly mark deprecated or inactive services. Automated agents should not use legacy code as a reference for current standards.

- Use agents to generate the initial draft. The cognitive overhead of starting from a blank page is real. Agents are excellent at producing a structured first pass that engineers then validate and refine.

- Feed documentation to your CI pipeline. An AI quality reviewer with architectural context is a different class of tool than one without it.

- Build your own Quality Gate - and make it yours. This is the key advantage that enterprise SaaS cannot match: flexibility. A custom Cloud Run service backed by Gemini and driven by your compliance rules, your architectural standards, and your team conventions means every developer can have a personal reviewer that understands the exact context of the project - not a generic ruleset designed for the average of all possible codebases.

6. Conclusion

Architecture documentation has historically been treated as optional overhead - valuable in theory, deprioritized in practice. This experiment demonstrates that when documentation is colocated with source code, follows a consistent machine-readable standard, and is kept current with the help of autonomous agents, it becomes a critical infrastructure component.

It enables automated systems to reason at the level of platform design, not just code syntax. It transforms AI-powered quality gates from expensive linters into genuine architectural advisors. And it can be generated - for an entire platform - while you are doing something else entirely.

The $10,000 ARCHITECTURE.md is not a metaphor. It is the estimated cost differential between finding a critical architectural flaw in a 5-minute CI review versus discovering it during a production incident, a compliance audit, or a cloud storage invoice that nobody expected.

Keep your architecture documented. Keep it in the repository. Let agents maintain it.

Stay standardized. Stay secure.
