Unit testing doesn't fail because developers don't care.

It fails because it breaks flow.

You write a function → you're in the zone → and now you're supposed to stop, switch files, and write tests.

So most of the time… you don't.

Most AI test generators fail in real projects for one reason:

they overwrite things developers care about.

I've spent 8+ years in QA, mostly in healthcare, and watched this pattern repeat across every team I worked with. Smart developers. Good intentions. No tests.

Most AI test generators try to fix this. But they introduce a new problem: they regenerate your entire test file. Your manual tests get overwritten. Your tweaks disappear. You stop trusting the tool.

If I don't trust it, I won't use it.

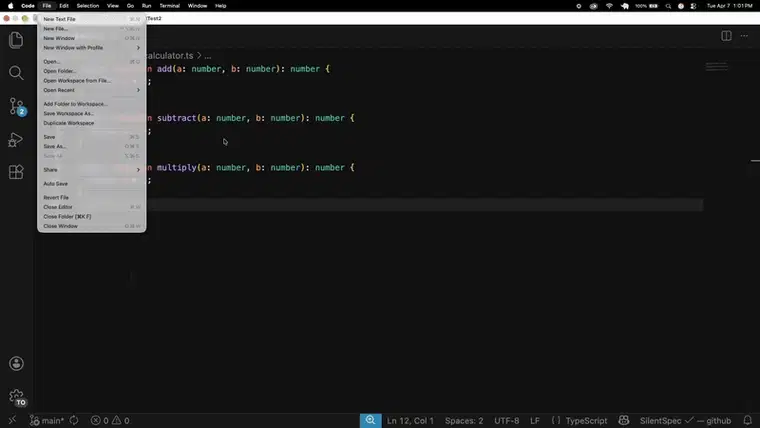

So I tried a different approach: what if tests were generated on save — but only for what's missing?

    // You write this
    export function add(a: number, b: number) {
      return a + b;
    }

Save → test appears. No commands. No sidebar. No context switch.

That's SilentSpec: a VS Code extension that generates tests for JavaScript and TypeScript without rewriting your existing ones.

When you add a new function, SilentSpec generates tests for that function only. It doesn't touch existing ones.

But the save trigger isn't the interesting part. The interesting part is what it refuses to do.

The core idea

SilentSpec only generates tests for functions that don't already have coverage.

Add one function → get one describe block. Nothing else changes.

The design constraint wasn't "generate good tests." It was "don't destroy anything the developer already has."

How it works

When you save a file, SilentSpec runs through this pipeline:

- AST analysis — parses your source, extracts exported functions and classes

- Marker reconciliation — checks which exports already have generated tests

- Gap detection — identifies only uncovered functions

- Targeted generation — sends only the uncovered functions (not the entire file) to your AI provider

- Compile check — runs a TypeScript compiler pass and removes tests that don’t compile

- Zone-based write — inserts tests into the correct section without touching anything else
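The marker reconciliation and gap detection steps above can be sketched roughly as follows. This is a minimal illustration, not SilentSpec's actual source: it assumes coverage is recorded on a `// @ss-generated covered:` comment line (the format used in the example spec file in this post), and the helper names `readCoveredNames` and `findUncovered` are hypothetical.

```typescript
// Marker format taken from the example spec file in this post:
//   // @ss-generated covered: add, multiply, subtract
const COVERED_PREFIX = "// @ss-generated covered:";

// Hypothetical helper: collect every name listed on a covered marker line.
function readCoveredNames(specText: string): Set<string> {
  const covered = new Set<string>();
  for (const line of specText.split("\n")) {
    const trimmed = line.trim();
    if (!trimmed.startsWith(COVERED_PREFIX)) continue;
    for (const entry of trimmed.slice(COVERED_PREFIX.length).split(",")) {
      const symbol = entry.trim();
      if (symbol !== "") covered.add(symbol);
    }
  }
  return covered;
}

// Hypothetical helper: exports that have no generated tests yet.
// Only these names would be sent on to the AI provider.
function findUncovered(exportedNames: string[], specText: string): string[] {
  const covered = readCoveredNames(specText);
  return exportedNames.filter((exported) => !covered.has(exported));
}
```

With exports `add`, `multiply`, and `subtract` and a marker that lists only the first two, `findUncovered` returns just `subtract`. Matching by name is also why a rename looks like a brand-new function in a scheme like this.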

The four-zone file

Every managed spec file has clearly separated ownership:

    // == SS-IMPORTS (managed by SilentSpec) ==
    import { add, multiply, subtract } from './calculator';

    // == SS-USER-TESTS (yours — SilentSpec never modifies this) ==
    describe('my custom edge cases', () => {
      it('should handle floating point precision', () => {
        expect(add(0.1, 0.2)).toBeCloseTo(0.3);
      });
    });

    // == SS-GENERATED (managed by SilentSpec) ==
    // @ss-generated covered: add, multiply, subtract
    describe('add', () => {
      it('should return the sum of two numbers', () => {
        expect(add(1, 2)).toBe(3);
      });
    });

SilentSpec owns SS-GENERATED and SS-IMPORTS. You own SS-USER-TESTS. The boundary is never crossed.
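One way to keep that boundary is to treat the SS-GENERATED marker line as a hard split point and only ever write below it. The sketch below assumes the generated zone is the last zone in the file, as in the example above. `appendToGeneratedZone` is a hypothetical name, not SilentSpec's API, and a real implementation would also have to update the `@ss-generated covered:` list and the managed imports.

```typescript
const GENERATED_MARKER = "// == SS-GENERATED";

// Hypothetical helper: add one describe block to the end of the generated
// zone while leaving every line above the marker exactly as it was.
function appendToGeneratedZone(specText: string, newBlock: string): string {
  const lines = specText.split("\n");
  const markerIndex = lines.findIndex((line) => line.startsWith(GENERATED_MARKER));
  if (markerIndex === -1) {
    // Without a marker there is no safe place to write, so do nothing.
    throw new Error("SS-GENERATED marker not found; refusing to write");
  }

  // Imports, user tests, and the marker line itself are copied through verbatim.
  const head = lines.slice(0, markerIndex + 1);

  // Assumption: everything after the marker belongs to the generated zone.
  const existing = lines.slice(markerIndex + 1).join("\n").trim();
  const addition = newBlock.trim();
  const zoneBody = existing === "" ? addition : `${existing}\n\n${addition}`;

  return `${head.join("\n")}\n${zoneBody}\n`;
}
```

Failing loudly when the marker is missing is a deliberate choice in this sketch: a tool that guesses where to write is exactly the kind of tool that ends up overwriting handwritten tests.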

What if you already have tests?

SilentSpec doesn't touch existing handwritten specs. It creates a companion file instead:

    utils.ts
    utils.spec.ts             ← yours, untouched
    utils.silentspec.spec.ts  ← SilentSpec's managed file

Don't like it? Delete it. Your original is exactly where you left it.
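Choosing the target file could look something like the sketch below. It rests on two assumptions that the post does not confirm: that a spec file without the SS-GENERATED marker counts as handwritten, and that the plain `.spec` name is used when no handwritten spec exists. `chooseSpecPath` and its `readFile` callback are hypothetical names for illustration.

```typescript
const GENERATED_ZONE_MARKER = "// == SS-GENERATED";

// Hypothetical helper. `readFile` returns a file's text, or undefined when
// the file does not exist, which keeps this sketch free of Node imports.
function chooseSpecPath(
  sourcePath: string,
  readFile: (path: string) => string | undefined,
): string {
  // Split "src/utils.ts" into stem "src/utils" and extension ".ts".
  const match = /^(.*)(\.[^./\\]+)$/.exec(sourcePath);
  if (match === null) {
    throw new Error(`Cannot derive a spec path from "${sourcePath}"`);
  }
  const [, stem, extension] = match;
  const defaultSpec = `${stem}.spec${extension}`;
  const companionSpec = `${stem}.silentspec.spec${extension}`;

  const existing = readFile(defaultSpec);
  // Assumption: a spec without the SS-GENERATED marker is handwritten.
  const isHandwritten =
    existing !== undefined && !existing.includes(GENERATED_ZONE_MARKER);

  // Never write into a handwritten spec; use the companion file instead.
  return isHandwritten ? companionSpec : defaultSpec;
}
```

Given `src/utils.ts` and an existing handwritten `src/utils.spec.ts`, this picks `src/utils.silentspec.spec.ts`, so deleting the companion file leaves the original spec untouched.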

Bring your own AI provider (BYOK)

You’re not locked into one provider.

SilentSpec works with GitHub Models (default), OpenAI, Claude, Ollama/vLLM (for fully local generation), and enterprise setups like Azure OpenAI, AWS Bedrock, Google Vertex AI, and OpenAI-compatible endpoints.

Your code goes directly to your provider. No intermediary server. No login. No telemetry. Your API key, your code, your machine.

SilentSpec auto-detects Jest, Vitest, Mocha, or Jasmine from your project and adapts its mock strategies for NestJS, Next.js, React, Prisma, and GraphQL.
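The post doesn't describe how detection works. One common approach, sketched here purely as an assumption, is to look for the framework's npm package among the dependencies in `package.json`. The `detectFramework` name, the `PackageManifest` type, and the priority order are all hypothetical.

```typescript
type Framework = "jest" | "vitest" | "mocha" | "jasmine";

// Only the parts of package.json this sketch cares about.
interface PackageManifest {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
}

// Assumption: when several frameworks are installed, the first match wins.
// The order here is arbitrary and chosen only for illustration.
const CANDIDATES: Framework[] = ["vitest", "jest", "mocha", "jasmine"];

// Hypothetical helper: returns the first candidate found in the manifest,
// or undefined when none of the four is installed.
function detectFramework(manifest: PackageManifest): Framework | undefined {
  const installed = { ...manifest.dependencies, ...manifest.devDependencies };
  return CANDIDATES.find((candidate) => candidate in installed);
}
```

A project with `vitest` in `devDependencies` resolves to `"vitest"`, and a manifest with none of the four resolves to `undefined`, at which point a tool would need a fallback or a user setting.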

What I learned from real codebases

I validated SilentSpec against trpc, prisma, typeorm, and date-fns before publishing, and found 24 confirmed bugs and 4 research findings that shaped the current architecture. One example: a regex replacement in the import handler was destroying unrelated imports in files with complex re-exports, something that would never surface in a clean test project.

If you're trusting a tool that writes to your test files, you should know where it breaks.

What’s not perfect

Being honest:

- Only works on exported symbols

- Generated tests are compiler-checked, not semantically verified

- Frontend component testing is still basic

- Function renames regenerate tests

- Large files (>1500 lines) are skipped

Generated tests are a starting point.

Once you review them, SilentSpec won’t rewrite them.

What I'm looking for

I'm not looking for validation — I'm looking for ways this breaks.

What patterns in your codebase would fail here? Would you trust incremental test generation? What would stop you from using this?

The more edge cases I hear about, the better this gets.

If you want to try it, links below.

Built by a QA engineer who got tired of watching teams ship without tests. If something breaks, open an issue — I want to know about it.

Curious how people feel about this.

If AI-generated tests didn’t overwrite your existing tests, would that change whether you’d use them?

Or is the idea still too risky?