It's been a while since I've been able to get back into this; sometimes life just throws things your way. Still, I'm happy that this latest stretch of my journey into machine learning has been so satisfying!

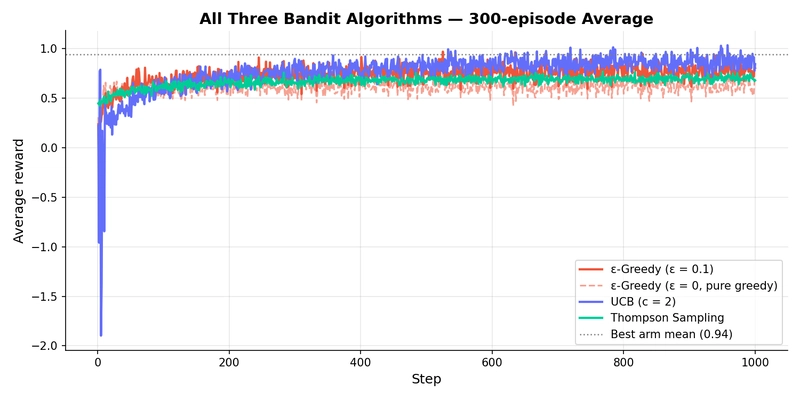

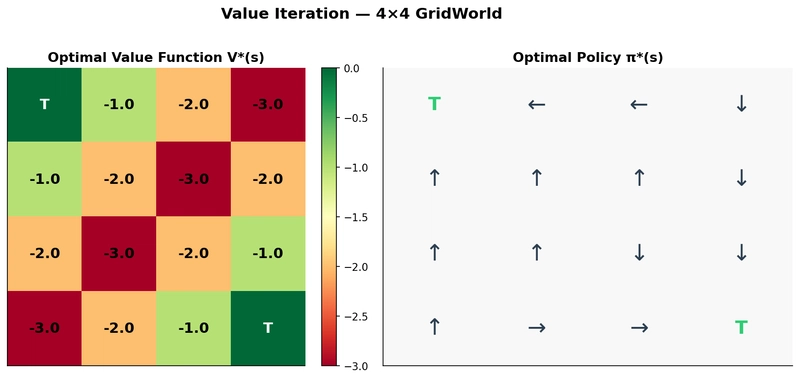

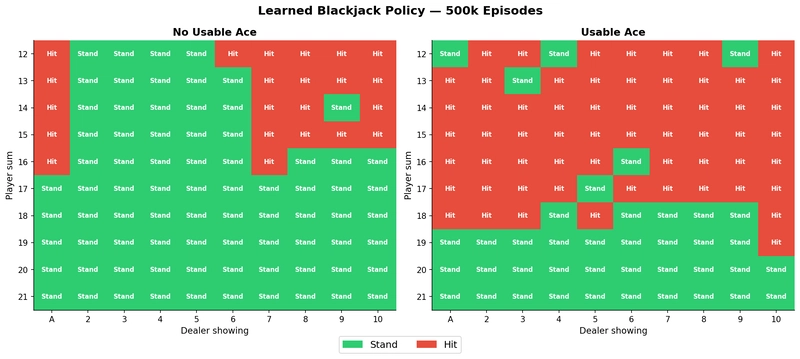

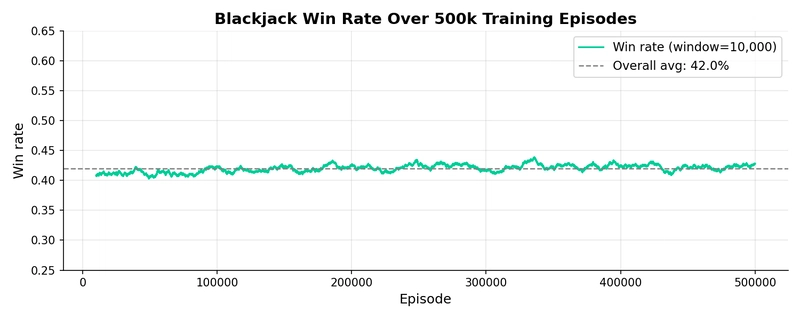

I've been working through Sutton & Barto's *Reinforcement Learning: An Introduction* and, if I'm honest, some of the maths took a while to click. Bellman equations, policy iteration, Beta posteriors: on the page they're coherent, but building a real intuition for why things behave the way they do is a different challenge entirely.

So I did what I always do when something isn't sticking: I got my hands dirty.

I built an interactive Streamlit app that implements the algorithms from scratch — no RL libraries, just NumPy — and lets you adjust parameters and watch what happens in real time. Nine pages covering bandits, dynamic programming, and Monte Carlo methods, with more to come as I work through the rest of the book.
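To give a flavour of what "from scratch, just NumPy" can look like, here's a minimal sketch of Thompson sampling on a Bernoulli bandit, where each arm's win rate is tracked with a Beta posterior. This is not the code from the app: the function name `thompson_sampling`, the arm probabilities, the step count, and the seed are all made up for illustration.

```python
import numpy as np


def thompson_sampling(true_probs, n_steps=2000, seed=0):
    """Run Thompson sampling on a Bernoulli bandit (illustrative sketch).

    true_probs: hypothetical win probability of each arm, hidden from the agent.
    Returns the number of pulls per arm and the posterior mean of each arm.
    """
    rng = np.random.default_rng(seed)
    true_probs = np.asarray(true_probs, dtype=float)
    n_arms = true_probs.size

    # Beta(1, 1) prior on every arm, i.e. uniform over [0, 1].
    alpha = np.ones(n_arms)  # 1 + successes observed so far
    beta = np.ones(n_arms)   # 1 + failures observed so far
    pulls = np.zeros(n_arms, dtype=int)

    for _ in range(n_steps):
        # Draw one plausible win rate per arm from its current posterior,
        # then act greedily with respect to those draws.
        samples = rng.beta(alpha, beta)
        arm = int(np.argmax(samples))

        # Simulate a 0/1 reward from the chosen arm.
        reward = int(rng.random() < true_probs[arm])

        # Conjugate update: a success bumps alpha, a failure bumps beta.
        alpha[arm] += reward
        beta[arm] += 1 - reward
        pulls[arm] += 1

    posterior_mean = alpha / (alpha + beta)
    return pulls, posterior_mean


pulls, posterior_mean = thompson_sampling([0.2, 0.5, 0.7])
print("pulls per arm:", pulls)
print("posterior means:", np.round(posterior_mean, 3))
```

Exploration falls out of the sampling step: an arm with few pulls has a wide posterior, so it occasionally produces a high draw and gets another try, while well-explored weak arms rarely do.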

Once it started helping me, I figured it might help someone else too. So I deployed it and put it online.

I'll keep adding to it as I finish the book — there's already a Summary page that accumulates plain-English notes on each concept as I work through it, and TD methods, function approximation, and policy gradients are all on the list.

One thing I'll say: Streamlit makes it remarkably easy to turn an algorithm into something legible and explorable. If you need proof, there are a few screenshots at the top of this post, but honestly, you should just go and have a go yourself.

Built this in (delayed) Week 4 of my transition from operations manager to ML engineer. One concept at a time, one visualization at a time.
