Two weeks ago I put a fresh site online and started logging every request. I wanted to answer a simple question: how much of my traffic is actually human?

Turns out, barely any.

The raw numbers

Across those two weeks I observed 145 distinct bots hitting the site. Some declared themselves honestly. Some pretended to be iPhones from 2019. Some came in through Cloudflare. Some came in through rotating AWS IPs and never stopped.

I was interested in more than just counting them. I wanted to know how each one behaved — not the identity, the conduct. Did it read robots.txt? Did it respect rate limits? Did it avoid obviously private paths? Did it keep a stable user-agent across requests?
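
As a rough illustration of what checking conduct from logs can look like, here is a minimal sketch. The `Hit` record, its field names, and the list of private path prefixes are all assumptions made for this example, not the schema or rules actually used. Rate-limit behavior is left out because it needs timestamps.

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical log record; the field names are assumptions for this sketch.
@dataclass
class Hit:
    client: str      # key that groups requests into one "bot" (IP, UA family, ...)
    user_agent: str
    path: str


# Assumed examples of "obviously private" paths.
PRIVATE_PREFIXES = ("/wp-admin", "/.env", "/.git")


def conduct_signals(hits):
    """Summarize observable conduct per client from a list of Hit records."""
    by_client = defaultdict(list)
    for hit in hits:
        by_client[hit.client].append(hit)

    signals = {}
    for client, rows in by_client.items():
        signals[client] = {
            "read_robots": any(r.path == "/robots.txt" for r in rows),
            "probed_private": any(r.path.startswith(PRIVATE_PREFIXES) for r in rows),
            "stable_user_agent": len({r.user_agent for r in rows}) == 1,
            "requests": len(rows),
        }
    return signals
```

Each client ends up with a small dictionary of booleans and a request count, which is enough to compare bots by behavior rather than by name.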

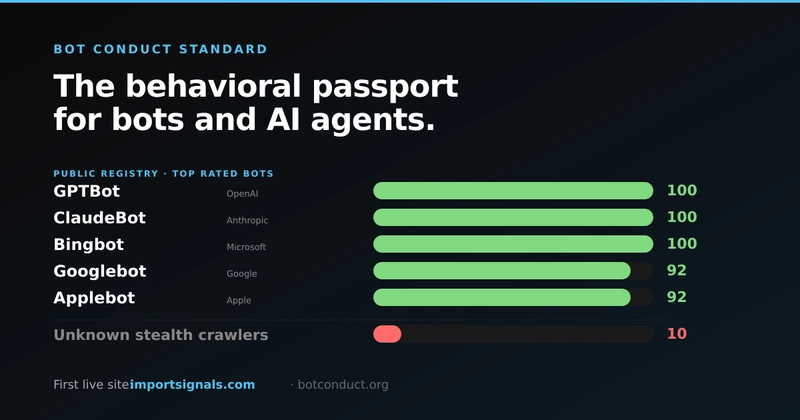

I ended up with a scoring system. Each bot got a number between 0 and 100 based on observable behavior.
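
To make the idea of a 0 to 100 behavior score concrete, here is a toy version. It is not the actual rubric: the flag names and penalty weights below are hypothetical placeholders, chosen only so the arithmetic is easy to follow.

```python
# Hypothetical weights. These are placeholders for illustration only,
# not the real scoring rules.
PENALTIES = {
    "ignored_robots": 25,
    "probed_private": 40,
    "unstable_user_agent": 20,
    "missing_user_agent": 15,
}


def conduct_score(flags):
    """Start at 100, subtract a penalty for each flag that is set, clamp to 0..100."""
    penalty = sum(cost for name, cost in PENALTIES.items() if flags.get(name))
    return max(0, min(100, 100 - penalty))
```

A crawler that trips no flags stays at 100, and one that trips every flag in this toy table bottoms out at 0.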

The distribution was surprising.

The well-behaved majority

The bots at the top of the ranking are exactly the ones you would expect. Major search engines. AI crawlers from the big labs. A few SEO tools. Social preview bots.

GPTBot (OpenAI), ClaudeBot (Anthropic), Bingbot (Microsoft), Bytespider (ByteDance), Baiduspider, YandexBot, Meta's scraper, redditbot — all landing at 100 out of 100.

It makes sense once you think about it. These companies operate massive crawling infrastructure. They know every site they hit is watching. They have compliance teams. Their crawlers are boring in the best way — they announce themselves, stay within limits, and leave.

The hostile minority

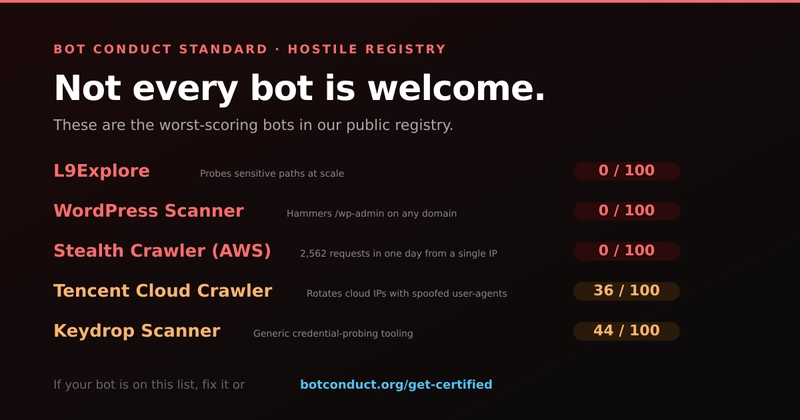

The bottom of the ranking was where it got interesting.

About 27% of bots scored below 50, roughly 39 of the 145. A few of them were recognizable: L9Explore, the crawler operated by LeakIX, probing sensitive paths aggressively. Keydrop Scanner doing credential probing. A stream of anonymous WordPress scanners hammering /wp-admin on every domain they find.

The worst offender was a single IP on AWS that sent 2,562 requests in one day. No user-agent. No interest in robots.txt. Just walking through every endpoint it could find.
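
Spotting an offender like this mostly comes down to counting requests per IP per day. A minimal sketch, assuming each log record is an `(iso_timestamp, ip, user_agent)` tuple (that shape is an assumption for this example, and the addresses in any sample data should come from documentation ranges):

```python
from collections import Counter
from datetime import datetime


def busiest_clients(records, top=3):
    """Count requests per (day, IP) and return the heaviest pairs.

    `records` is an iterable of (iso_timestamp, ip, user_agent) tuples;
    that shape is assumed for this sketch.
    """
    counts = Counter()
    for timestamp, ip, _user_agent in records:
        day = datetime.fromisoformat(timestamp).date()
        counts[(day, ip)] += 1
    return counts.most_common(top)
```

The result is a list of `((day, ip), request_count)` pairs, heaviest first, so the top entry is the first place to look.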

Another favorite: a bot whose user-agent claimed "iPhone; iPhone OS 13_2_3", an iOS version from late 2019. Nobody real is running that in 2026. The user-agent is a lie and the behavior matches. It was distributed across dozens of residential IPs.
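
A check for this kind of stale claim can be sketched in a few lines. The cutoff version below is an assumed placeholder, not a recommendation, and an old version on its own is only a hint; it is the combination with the behavior that makes the case.

```python
import re

# Matches the OS token in iPhone user-agents, e.g. "iPhone OS 13_2_3".
IOS_VERSION = re.compile(r"iPhone OS (\d+)_(\d+)(?:_(\d+))?")

# Assumed cutoff: major versions below this are treated as suspiciously old.
# 15 is only a placeholder; pick a value from your own traffic.
MIN_PLAUSIBLE_IOS_MAJOR = 15


def claims_stale_ios(user_agent, min_major=MIN_PLAUSIBLE_IOS_MAJOR):
    """Return True if the user-agent claims an iOS major version below min_major."""
    match = IOS_VERSION.search(user_agent)
    if match is None:
        return False  # not an iPhone user-agent, so this check has no opinion
    return int(match.group(1)) < min_major
```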

The middle is the interesting part

The two ends of the distribution are easy. Known good bots are good. Obvious scanners are obviously malicious.

The middle third is where real decisions live. Crawlers from cloud providers like Tencent sat around 36. Not malicious per se, but also not identifying themselves well and using rotating IPs. If I were running a site that mattered, would I let those through? Block? Rate-limit?

This is the category where "block everything automated" destroys legitimate use cases (partners, vendors, research tools) and "allow everything" destroys your servers. It's where the real work is.

What I built

I stopped logging and started building. The passive observations became an API — you send it a suspicious request, it sends you back a score and a recommended action.

The action is one of four: allow, throttle, challenge, block. Anything my middleware can handle in three lines.

    verdict = bcs.score(
        # .get() avoids a KeyError for bots that send no User-Agent at all
        user_agent=request.headers.get("User-Agent", ""),
        path=request.path,
        headers=dict(request.headers),
    )
    if verdict["action"] == "block":
        return 403
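
The snippet above only handles block. One way to cover all four actions is a small lookup table, sketched here. Only block mapping to 403 comes from the snippet; the 429 for throttle, the 403 for challenge, the message strings, and the choice to let unknown actions through are assumptions for this example.

```python
# Hypothetical mapping from the four actions to (status, message).
# Only "block" -> 403 comes from the original snippet; the rest are assumptions.
RESPONSES = {
    "allow": None,                          # None means "continue to the real handler"
    "throttle": (429, "slow down"),         # 429 Too Many Requests
    "challenge": (403, "challenge required"),
    "block": (403, "forbidden"),
}


def respond_to(verdict):
    """Translate a verdict dict into (status, message), or None to let the request through."""
    action = verdict.get("action", "allow")
    # Unknown actions fail open here; failing closed is an equally valid choice.
    return RESPONSES.get(action)
```

In a middleware, a `None` result means the request proceeds as normal, and anything else becomes the response.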

The rubric that produces the score is proprietary, but the verdicts are public. Every bot I scored shows up in a public registry with its current rating. Operators can claim their entries and upgrade to a cryptographically signed identity if they want higher trust.

What it changed for me

Before this experiment, I treated automated traffic as a nuisance. Something to filter, block, ignore.

After two weeks of looking closely, I think about it differently. The web is becoming a conversation between automated agents — and most of them are trying to do their jobs well. The bad ones are loud, and they get all the attention, but they are the minority.

Giving the well-behaved agents a way to prove it — and the sites a way to verify it — seems like a better answer than the status quo of blocking everything automated.

If you want to try it

- If you run a bot or agent: there is a public certification flow. It takes 30 seconds for basic certification, a few minutes for something more serious.

- If you run a site: the API has a free tier (5,000 scores per month) if you want to experiment.

Everything is at botconduct.org. The first production site running this end-to-end is importsignals.com — their bot policy page is a reasonable reference if you want to see what it looks like in the wild.

Would love to hear from other people who have measured their bot traffic seriously. I suspect the 27% hostile number is conservative.

Follow-up thread and registry updates at @botconduct.

Rafa Mizrahi
